DX12 is a bit of an... 'interesting' beast to utilise. It's still undergoing work, tweaks and development, and NVIDIA and AMD have their driver teams working at full throttle. Without a doubt, it is a significantly better architecture than DX11 and earlier. It has been refactored (and largely rewritten) PROPERLY to take advantage of modern processor architectures (multi-threading, multiple cores, etc.). Earlier versions of the API didn't lend themselves well to multi-threading at all. DX11 tried to some extent, but it was not trivial to take advantage of, and the headaches and pitfalls were often not worth the effort. (Deferred Contexts in DX11 let secondary threads record commands into command lists that were later played back on the main 'immediate' context; it was a compromise that typically resulted in a performance loss rather than a gain.) Prior to DX11... forget it. Single-threading was the only way to go, and God help those who tried to use the API in a multi-threaded way. DX9 and DX10 were primarily designed to be single-threaded. When you tried to use multi-threading, deadlock was never far away and you could instantly see a 50% performance drop in your game...

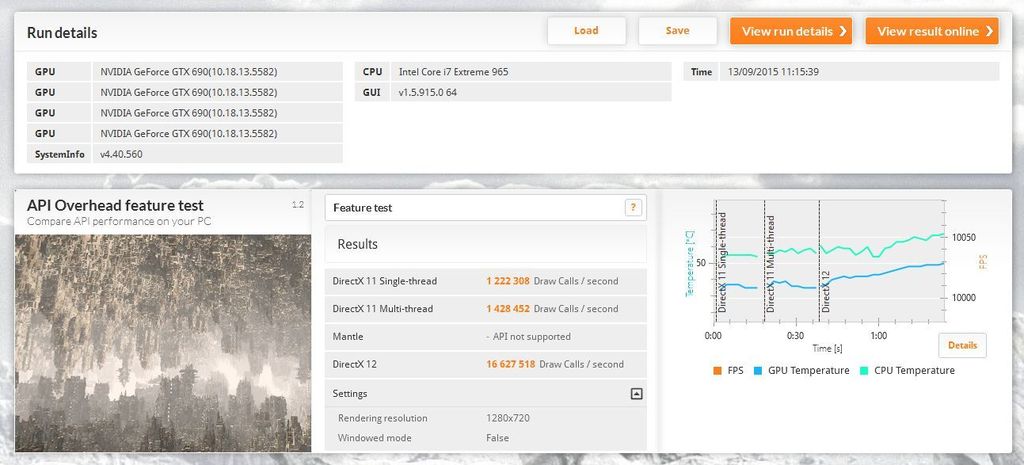

DX12 has been built properly, embracing the parallel processing nature and capabilities of modern GPUs and CPUs. Through clever scheduling and ordering of rendering tasks, significant performance gains can be made. The draw call example given above hints at how effective the new architecture could be: draw call overhead has been massively reduced in DX12. Rendering engines using DX11 and earlier would try to minimise the number of draw calls made per frame by carefully batching render geometry and managing the global GPU state. Once everything was in order, a "draw call" would be made and the rendering request sent to the driver for processing. On CPU-bound systems this could introduce a stall or added latency. With DX12, multiple threads can record command lists in parallel (simultaneously) and submit them to separate command queues (graphics, compute and copy), so compatible tasks can be scheduled far more efficiently. As the example shows, the number of draw calls achievable can differ by an order of magnitude. However, this can also be a bit misleading.
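To make the multi-threaded recording idea a little more concrete, here is a minimal C++ sketch of the pattern under a deliberately simplified model: each worker thread records draws into its own command list with no locking, and the main thread then submits every list to a queue in a fixed order. `CommandList`, `CommandQueue` and `recordInParallel` are hypothetical stand-ins invented for illustration. They are not the real Direct3D 12 interfaces (the rough real-world analogues would be `ID3D12GraphicsCommandList` and `ID3D12CommandQueue::ExecuteCommandLists`), and real recording also involves command allocators, pipeline state and fences, all omitted here.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <thread>
#include <vector>

// Hypothetical stand-in for a recorded command list (NOT ID3D12GraphicsCommandList).
struct CommandList {
    std::vector<int> drawIds;  // IDs of the draws recorded into this list
    void draw(int id) { drawIds.push_back(id); }
};

// Hypothetical stand-in for a command queue: "executes" lists in the order given.
struct CommandQueue {
    std::vector<int> executed;
    void execute(const std::vector<CommandList>& lists) {
        for (const CommandList& list : lists)
            executed.insert(executed.end(), list.drawIds.begin(), list.drawIds.end());
    }
};

// Splits `totalDraws` draws across `numThreads` workers. Each worker writes only
// to its own CommandList, so no locks are needed. After all workers are joined,
// the main thread submits the lists in a fixed order, keeping output deterministic.
std::vector<int> recordInParallel(int totalDraws, int numThreads) {
    if (totalDraws <= 0 || numThreads <= 0) return {};

    std::vector<CommandList> lists(static_cast<std::size_t>(numThreads));
    std::vector<std::thread> workers;
    const int chunk = (totalDraws + numThreads - 1) / numThreads;  // ceiling division

    for (int t = 0; t < numThreads; ++t) {
        workers.emplace_back([&lists, t, chunk, totalDraws] {
            const int begin = t * chunk;
            const int end = std::min(begin + chunk, totalDraws);
            for (int id = begin; id < end; ++id)
                lists[static_cast<std::size_t>(t)].draw(id);  // distinct element per thread
        });
    }
    for (std::thread& w : workers) w.join();  // recording finished before submission

    CommandQueue queue;
    queue.execute(lists);  // single, ordered submission
    return queue.executed;
}
```

For example, `recordInParallel(10, 4)` splits ten draws across four workers (3 + 3 + 3 + 1) and returns them in submission order, 0 through 9. The point of the design is that recording, the expensive CPU-side part, happens in parallel, while the order in which work reaches the queue stays deterministic.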

The draw call improvements will certainly benefit lower-powered CPU systems (as seen in early reports and demos), whilst the effects will be considerably less evident on high-end enthusiast machines. Draw call overhead alone isn't a suitable metric for comparing the general performance of one system against another, and it is a poor measure of latency too. I think it could be some time before we get a real measure of how much of a performance benefit DX12 will bring to the PC (and Xbox One) platforms. The development community needs to be persuaded to make the move to DX12 (which some are doing and some are resisting).

The move to DX12 could be a blessing or a curse. DX11 (and earlier) was often described as too high-level. The driver handled a lot of the operational complexity, leaving the developer free to concentrate on other things; it managed memory paging (moving resources in and out of video memory) and other lower-level tasks. This made development easier but didn't necessarily allow the developer to squeeze the very best performance out of the hardware. Whereas console developers enjoyed the luxury of a somewhat lower-level abstraction of the underlying hardware, this was not mirrored in PC development circles. That's where DX12, Mantle and Vulkan come in (Mantle having effectively been deprecated). DX12 gives the developer a lower-level view of the hardware and the ability to write code for tasks that were effectively the domain of the graphics driver in previous API versions.

As said, this cuts both ways. It allows a savvy developer to extract more performance from a system by carefully tailoring their code to a specific hardware setup, but this risks reducing compatibility with other hardware on the market. To what degree do developers go in introducing new, lower-level code paths to accommodate the plethora of hardware available to consumers? Effective and efficient low-level development takes time and money, resources that aren't always readily available to game developers. It will be interesting to see how developers react to the API as it gathers momentum and becomes more widespread. Personally, I think DX12 is a very good thing, and developers will eventually have to consider updating and evolving their engines to use it. But I also think it could take some time before we see the first real benefits of DX12 in new games; maybe as far as 18 months / 2 years from now (but don't hold me to that!)